KDS - Google Universal Image Embedding

- Competition End time: 2022-10-17

- Submission Format: notebook <= 9h

Key Features

- No training data provided.

- Ensembling does not work easily here (see the discussion thread).

Evaluation

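The metric is mean Precision @ 5 (mP@5), with a small modification to avoid penalizing queries with fewer than 5 expected index images.

\[mP@5 = \frac{1}{Q} \sum_{q=1}^{Q} \frac{1}{\min(n_q, 5)} \sum_{j=1}^{\min(n_q, 5)} rel_q(j)\]

Here \(Q\) is the number of queries, \(n_q\) is the number of expected index images for query \(q\), and \(rel_q(j)\) is 1 if the \(j\)-th retrieved image is relevant to query \(q\) and 0 otherwise.

- Embedding dimension must be <= 64.

- Models must be compatible with TensorFlow 2.6.4 or PyTorch 1.11.0.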

The host runs a k-NN (k=5) lookup for each test sample, using the Euclidean distance between test and index embeddings.
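To make the evaluation concrete, here is a minimal pure-Python sketch of the k-NN lookup and the mP@5 score. It is not the host's scoring code: the function names are hypothetical, relevance is assumed to mean "same label as the query", and a query with no expected index images is assumed to contribute 0.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def top_k_neighbors(query, index_embeddings, k=5):
    """Positions of the k index embeddings closest to the query."""
    order = sorted(range(len(index_embeddings)),
                   key=lambda i: euclidean(query, index_embeddings[i]))
    return order[:k]

def mean_precision_at_5(query_embs, query_labels, index_embs, index_labels):
    """mP@5 with the min(n_q, 5) normalization from the formula above.

    Assumption: an index image is relevant when its label equals the query label.
    """
    total = 0.0
    for q_emb, q_label in zip(query_embs, query_labels):
        n_q = sum(1 for label in index_labels if label == q_label)
        cutoff = min(n_q, 5)
        if cutoff == 0:
            continue  # assumption: no expected images means a score of 0
        neighbors = top_k_neighbors(q_emb, index_embs, k=5)
        hits = sum(1 for i in neighbors[:cutoff] if index_labels[i] == q_label)
        total += hits / cutoff
    return total / len(query_embs)

# Toy data: 2-D embeddings instead of the 64-D limit, purely for illustration.
index_embs = [[0, 0], [0, 1], [5, 5], [5, 6], [9, 9]]
index_labels = ["a", "a", "b", "b", "c"]
query_embs = [[0, 0.5], [5, 5.5], [9, 8]]
query_labels = ["a", "b", "a"]

score = mean_precision_at_5(query_embs, query_labels, index_embs, index_labels)
print(score)
```

The first two queries retrieve both of their expected images, and the third retrieves none, so only two of the three queries contribute to the mean.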

Data

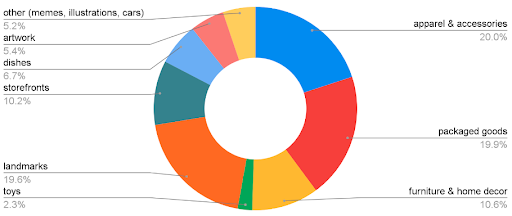

No training data is provided. The figure above shows the distribution of the test data.

- External data thread: https://www.kaggle.com/competitions/google-universal-image-embedding/discussion/337384

Great Notebooks

- CLIP-TF-Train-Example

  - CLIP + ArcFace + TPU training.

- GCVIT

  - Global Context Vision Transformer

- Understand Comp Domain and ImageNet 21k Labels

  - Understanding the competition domain and labels

Solutions

- 1st place solution

  - Start from pre-trained weights, without any training or fine-tuning at first.

  - CLIP (GitHub)

  - ArcFace

  - Add datasets to the training list iteratively to save time and maintain good performance.

  - Unfreeze the backbone only after the linear head is well trained, so the randomly initialized linear head cannot disturb the backbone weights.

  - Use a 10 times lower initial learning rate.

  - Overfitting happens easily, and the linear projection weights jump sharply.

  - So freeze the linear head while training, and add dropout to the fully connected layer.

  - Clever ensemble to overcome different F(C, X) issues

  - Resolution 224 + resolution 280

  - LAION-5B CLIP model (blog)

- 2nd place solution

  - Dynamic margin

  - Stratified learning rate when training the non-backbone part.

- 4th place solution

- 5th place solution

- The rest: see the thread
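Several of the notebooks and solutions above rely on an ArcFace head. As a rough illustration of the idea (not any team's actual code), the sketch below computes ArcFace logits for a single embedding in pure Python: embedding and class weights are L2-normalized, an additive angular margin is applied to the target class only, and the cosines are scaled before cross-entropy. The function names and the `margin=0.5`, `scale=30.0` defaults are assumptions chosen for the example, and the handling of angles beyond pi that full implementations include is omitted.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def arcface_logits(embedding, class_weights, target, margin=0.5, scale=30.0):
    """Scaled cosine logits with an angular margin added for the target class."""
    e = l2_normalize(embedding)
    logits = []
    for c, w in enumerate(class_weights):
        cos = sum(a * b for a, b in zip(e, l2_normalize(w)))
        cos = max(-1.0, min(1.0, cos))  # guard acos against rounding error
        if c == target:
            # Penalize the target class by enlarging its angle by `margin`.
            cos = math.cos(math.acos(cos) + margin)
        logits.append(scale * cos)
    return logits

def cross_entropy(logits, target):
    """Numerically stable softmax cross-entropy for one sample."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[target]

# Toy example: a 2-D embedding halfway between two class centers.
embedding = [1.0, 1.0]
class_weights = [[1.0, 0.0], [0.0, 1.0]]

with_margin = arcface_logits(embedding, class_weights, target=0)
without_margin = arcface_logits(embedding, class_weights, target=0, margin=0.0)

loss_with = cross_entropy(with_margin, 0)
loss_without = cross_entropy(without_margin, 0)
print(loss_with, loss_without)
```

The margin lowers the target logit, so the same embedding incurs a higher loss and has to move closer to its class center during training. A "dynamic margin" variant would pass a different `margin` per class or per step; how the 2nd place team chose it is not stated in these notes.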